AI Failures Aren’t Instant: Why Performance Decays Over Time

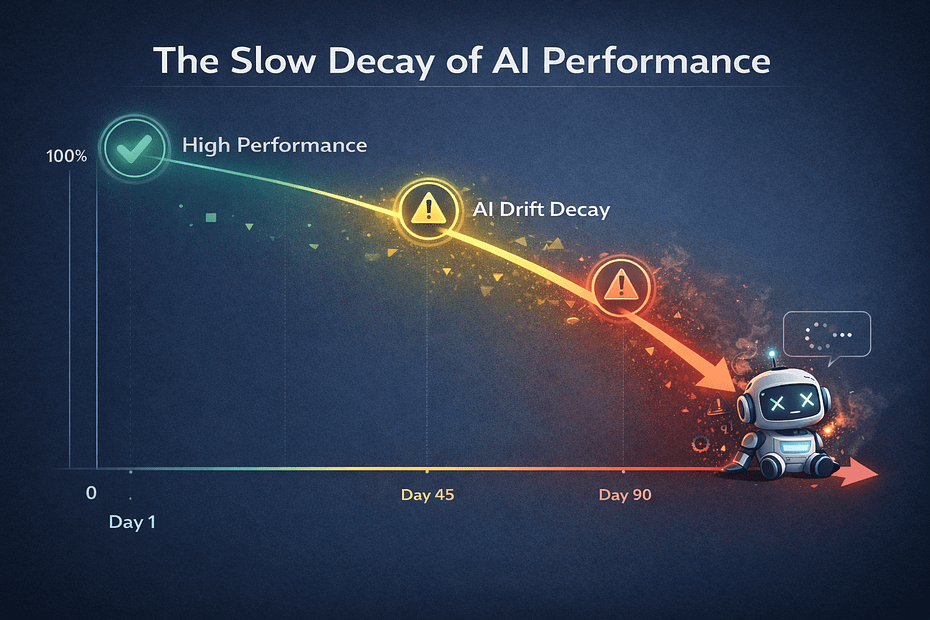

AI performance gets worse over time, and it’s a problem most businesses don’t see coming.

AI chatbots and voice agents are becoming standard tools for small businesses. They handle bookings, answer questions, and reduce support load. The setup is getting easier, the pricing is getting more accessible, and the results are impressive at first.

Three months after launch, your chatbot that was booking 92% of appointments successfully is down to 83%. Your voice agent that handled customer questions smoothly now asks callers to repeat themselves.

Nothing crashed. No error messages.

But your bot got worse, and nobody noticed until customers started complaining.

This is called AI drift, and it’s the single biggest reason AI projects that start strong end up abandoned.

If you’ve deployed AI or you’re planning to, understanding drift is the difference between an AI bot that pays for itself and one that becomes a liability.

This article breaks down what AI drift (sometimes called model drift) actually is, why it happens, and, most importantly, how to catch it before your customers do.

What is AI drift?

AI drift is the gradual decline in the performance of chatbots, voice agents, and AI workflow systems after deployment.

Unlike traditional software, which behaves the same until someone changes it, AI degrades as business rules change or underlying models shift. This often causes “silent failures,” where the system runs but delivers progressively worse results.

Here’s what that looks like in practice.

Your HVAC booking bot launched in January. Your customers loved it! Support tickets dropped 60%, and your team celebrated. Project closed. Team moved on.

By April, something’s wrong.

Customers are repeating themselves. Response times doubled. Booking success rate dropped from 92% to 83%. But your dashboard shows green. No errors. No crashes.

Now your customers are angry.

They’re bypassing the bot entirely and calling support. The efficiency gains you celebrated three months ago are gone.

This is drift.

It’s not a bug you can patch. Drift happens because of how AI actually works.

For example:

AI providers update their models, often with little notice. OpenAI pushes a new version of GPT. Anthropic updates Claude. Your prompt behaves differently overnight. You didn’t change anything, but now your bot is giving wrong answers.

Seasonal patterns shift. Winter emergency calls sound different than summer service appointments. Same business, same services, different expectation of urgency.

Technical limitations compound problems. Long conversations can push critical instructions out of the context window. The bot “forgets” its own rules mid-interaction.

Even if your business never changes, your AI will still drift. It’s trained on a snapshot in time and deployed into a world that keeps evolving.

Most businesses treat AI like traditional software: deploy it once, let it run.

But you can’t do this with AI.

Why AI Performance Drops After Launch

Even before you understand what’s happening, you can see the symptoms.

Your vet clinic bot quotes the wrong hours because the summer schedule ended six weeks ago.

Your auto shop assistant recommends the same service twice in one conversation.

Your plumbing voice agent asks customers to describe their flooded basement in detail instead of routing them to emergency dispatch.

Nothing crashes. Everything technically works. But the output is wrong, outdated, or irrelevant.

Task completion rates slip week by week. Token usage climbs because the bot needs three exchanges to accomplish what used to take one. Customers abandon mid-conversation and call support instead.

Long conversations expose a different problem

In deployments without active maintenance, your chatbot or voice agent can start strong but lose coherence eight minutes into a conversation. It forgets its own rules mid-call.

It could:

Break character

Fabricate information

Once the context window fills up, critical instructions can get pushed out by conversation history.

This isn’t decay over time; it’s a technical ceiling that reveals itself in extended interactions and compounds the problem.

This happens consistently across AI deployments.

Within 60 to 90 days of launch, most chatbots and voice agents start showing obvious performance problems. Three months from setup to customer complaints.
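For the long-conversation problem, one common mitigation is to manage the conversation history yourself so the system prompt is never the thing that gets dropped. Below is a minimal sketch, assuming messages are dicts with `role` and `content` keys and approximating tokens by counting words (a real deployment would use the provider’s own tokenizer and context limits). `fit_to_budget` and `approx_tokens` are hypothetical helpers, not part of any library.

```python
from typing import Dict, List

Message = Dict[str, str]  # {"role": ..., "content": ...}


def approx_tokens(text: str) -> int:
    # Rough stand-in for a real tokenizer: assumes about one token per word.
    return len(text.split())


def fit_to_budget(system_prompt: str, history: List[Message], budget: int) -> List[Message]:
    """Return a message list that stays within `budget` tokens.

    The system prompt is always kept first; the oldest turns are dropped
    until the most recent turns fit in whatever budget remains.
    """
    system = {"role": "system", "content": system_prompt}
    remaining = budget - approx_tokens(system_prompt)
    if remaining < 0:
        raise ValueError("system prompt alone exceeds the budget")

    kept: List[Message] = []
    # Walk backwards from the newest turn so recent context survives.
    for msg in reversed(history):
        cost = approx_tokens(msg["content"])
        if cost > remaining:
            break
        kept.append(msg)
        remaining -= cost
    kept.reverse()
    return [system] + kept
```

Trimming from the oldest end keeps the customer’s most recent turns intact. A variant worth considering is summarizing the dropped turns instead of discarding them outright.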

What does AI drift actually mean?

It isn’t a malfunction.

It’s the gap between what your AI learned when it was built and what you need it to do today.

Traditional software lets you know when it breaks: error messages, stack traces, and ‘500’ server errors. When something fails, you know immediately.

AI degrades quietly. Responses get slower, and answers can become wrong or outdated. Your bot starts doing things it shouldn’t, and quality erodes before you notice anything breaking.

What you need to understand is that AI workflows require updates on a regular basis.

The Root Causes: How Small Problems Compound

Understanding drift means understanding how problems compound.

Data changes trigger concept changes. Prompt patches create new conflicts. System updates break integrations. Each problem creates the next one.

Let’s have a look at what might happen in real life:

Data drift:

You add a new service to your website but forget to update what your bot knows. Customers start asking about installations, and your bot has no idea what they’re talking about because it only knows about repair services. Or you expand your service area to cover three new towns, and customers get told “we don’t service your area” even though you now do.

Pricing changes, seasonal offerings, warranty terms update – every time your business evolves, your chatbot is still operating on old information unless someone manually updates it.

Data drift triggers concept drift:

This is when the relationship between inputs and correct outputs changes. Your refund policy extends from 14 days to 30 days, but the bot still operates on the old rule because nobody updated its prompt to reflect the new policy. A customer calling on day 20 gets told they’re out of luck, and they get angry when they’re refused a refund they’re entitled to.

New services launch. Old ones get deprecated. Warranty terms change. Each update makes the bot’s knowledge progressively stale.
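Both problems come from the same gap: the facts the bot was configured with fall out of sync with the business. Below is a minimal sketch of a check that catches this, assuming you keep a structured snapshot of those facts (refund window, services, service area) next to the current values from your own records. `stale_facts` and the key names are hypothetical; the idea is to diff the bot’s knowledge against the source of truth on a schedule instead of waiting for a customer to find the mismatch.

```python
from typing import Any, Dict, List, Tuple

Facts = Dict[str, Any]


def stale_facts(deployed: Facts, current: Facts) -> List[Tuple[str, Any, Any]]:
    """Return (key, value_bot_uses, current_value) for every fact that differs.

    A key present on only one side is reported with None for the missing value.
    """
    changes = []
    for key in sorted(set(deployed) | set(current)):
        old, new = deployed.get(key), current.get(key)
        if old != new:
            changes.append((key, old, new))
    return changes


# Hypothetical example: the bot was set up before two business changes.
deployed = {"refund_window_days": 14, "services": ["repair"], "phone": "555-0100"}
current = {"refund_window_days": 30, "services": ["repair", "installation"], "phone": "555-0100"}

for key, old, new in stale_facts(deployed, current):
    print(f"STALE {key}: bot says {old!r}, business says {new!r}")
```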

Prompt drift:

Five people on your team tweaked the system prompt to handle edge cases, but no one coordinated the changes. Now your chatbot or voice agent rambles because these accumulated edits pull it in conflicting directions.

One person added verbosity to seem friendlier. Another added disclaimers for legal coverage. Someone else added examples to reduce confusion. The prompt ballooned from 200 words to 800 words, and its response quality degraded because the instructions became contradictory.

Knowledge base drift:

This also creates contradictions. You updated documentation, deprecated old policies, or added new products. Your retrieval system now pulls from current and outdated sources simultaneously. The bot returns conflicting information because it can’t distinguish which document is correct.
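One way to prevent this is to tag each document with the date it took effect (and, once deprecated, the date it was retired), then filter the candidate set before retrieval so only the newest in-force version per topic can come back. Below is a minimal sketch under those assumptions. The `Doc` fields and the `topic` grouping key are hypothetical, and how you apply such a filter depends on your retrieval tool; many vector stores support metadata filters, but check yours.

```python
from dataclasses import dataclass
from datetime import date
from typing import Dict, List, Optional


@dataclass
class Doc:
    topic: str                         # hypothetical grouping key, e.g. "refund-policy"
    text: str
    effective_from: date
    retired_on: Optional[date] = None  # set when a policy is deprecated


def current_docs(docs: List[Doc], today: date) -> List[Doc]:
    """Keep only documents in force today, and only the newest one per topic,
    so retrieval can never return an old and a new policy side by side."""
    latest: Dict[str, Doc] = {}
    for doc in docs:
        in_force = doc.effective_from <= today and (
            doc.retired_on is None or doc.retired_on > today
        )
        if not in_force:
            continue
        best = latest.get(doc.topic)
        if best is None or doc.effective_from > best.effective_from:
            latest[doc.topic] = doc
    return list(latest.values())


docs = [
    Doc("refund-policy", "Refunds within 14 days.", date(2024, 1, 1)),
    Doc("refund-policy", "Refunds within 30 days.", date(2024, 3, 1)),
    Doc("winter-promo", "10% off furnace tune-ups.", date(2023, 12, 1), retired_on=date(2024, 3, 1)),
]
```

Here the 14-day policy never needs to be deleted by hand; it is simply shadowed by the newer version of the same topic.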

System drift:

Your booking software changed its API response format. Maybe your calendar integration updated its authentication method. When components shift independently, your bot’s instructions for calling these tools become progressively wrong.

WHOOPS!

One broken integration stalls everything. The bot successfully collects booking information, calls the calendar API with outdated parameters, fails silently, and tells the customer “you’re all set” while nothing actually got scheduled.
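The guard against this is to make the bot’s confirmation depend on evidence that the booking actually exists. Below is a minimal sketch, assuming the calendar integration returns a dict. The `status` and `booking_id` fields are hypothetical stand-ins for whatever your booking API really returns. Anything unexpected, including a changed response format, is treated as a failure and handed to a person instead of being confirmed.

```python
from typing import Any, Dict


class BookingNotConfirmed(Exception):
    """Raised when the calendar call did not clearly succeed."""


def confirm_booking(response: Dict[str, Any]) -> str:
    """Only say "you're all set" if the response contains the fields we expect."""
    status = response.get("status")          # hypothetical field name
    booking_id = response.get("booking_id")  # hypothetical field name
    if status != "confirmed" or not booking_id:
        raise BookingNotConfirmed(f"unexpected calendar response: {response!r}")
    return f"You're all set. Your confirmation number is {booking_id}."


def reply_to_customer(response: Dict[str, Any]) -> str:
    try:
        return confirm_booking(response)
    except BookingNotConfirmed:
        # Fail loudly: hand off (and log) instead of pretending it worked.
        return "I couldn't finalize that booking, so I'm passing you to our team now."
```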

The Cascade

Data shifts → outputs drift → prompts get patched → knowledge conflicts → tools break. Small changes compound into system-wide degradation. Each fix creates new surface area for the next failure.

Why Companies Don’t Catch AI Drift

DIY projects fail hardest here because there’s no monitoring tied to business outcomes.

You’re tracking response times when you should be tracking booking success and customer satisfaction. Without prompt versioning, every edit overwrites the last one, and you can’t roll back to a version that worked.
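Below is a minimal sketch of prompt versioning, assuming your prompts live in your own code or config rather than only in a vendor dashboard. Each edit is appended along with who made it, why, and the model identifier it was tested against, so a bad change can be traced and rolled back. Where a provider offers dated model snapshots, pinning one here turns a model change into a deliberate new version instead of an overnight surprise. `PromptRegistry` and the placeholder model names are hypothetical; a git repository gives you much of this for free.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List


@dataclass(frozen=True)
class PromptVersion:
    version: int
    prompt: str
    model: str  # pinned model identifier (placeholder values below)
    author: str
    note: str   # why the change was made
    created_at: datetime


@dataclass
class PromptRegistry:
    """Append-only history: edits add a new version instead of overwriting."""
    versions: List[PromptVersion] = field(default_factory=list)
    active: int = 0  # version number currently serving customers

    def publish(self, prompt: str, model: str, author: str, note: str) -> PromptVersion:
        v = PromptVersion(
            version=len(self.versions) + 1,
            prompt=prompt,
            model=model,
            author=author,
            note=note,
            created_at=datetime.now(timezone.utc),
        )
        self.versions.append(v)
        self.active = v.version
        return v

    def current(self) -> PromptVersion:
        return self.versions[self.active - 1]

    def rollback(self, version: int) -> PromptVersion:
        if not 1 <= version <= len(self.versions):
            raise ValueError(f"no such version: {version}")
        self.active = version
        return self.current()


registry = PromptRegistry()
registry.publish("You book HVAC appointments.", "pinned-model-snapshot", "alex", "launch")
registry.publish("You book HVAC appointments. Always add a legal disclaimer.",
                 "pinned-model-snapshot", "sam", "legal asked for disclaimers")
registry.rollback(1)  # the disclaimer edit hurt booking success; restore launch prompt
```

Rolling back only moves the `active` pointer, so the rejected edit stays in the history for review.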

The next big problem is that nobody’s watching.

The project is “done” when the bot launches: the budget’s spent, the team moves on, and the person who built it is working on something else. When drift happens (not if, but when), there’s no one left who knows how to fix it.

Without baseline documentation and clear KPIs that record what “good” looked like on day one, there’s no clear definition of “correct” for AI outputs a few months later. A “good” answer this week becomes “acceptable” next week and “frustrating” next month. Nobody catches the slide because nobody defined the standard.

This is why competent AI providers don’t just hand you a bot and disappear.

The Hidden Cost: Lost Revenue vs. Ongoing Maintenance

When businesses say ‘AI doesn’t work,’ they blame the technology instead of the lack of maintenance. The problem isn’t the AI setup; it’s treating AI like software you set up once and forget about.

Failed bookings add up fast. Customers abandon conversations when the bot can’t help them. Token costs climb because the degraded bot takes three exchanges to accomplish what used to take one and you’re paying more for worse results.

Customer trust erodes. Customers stop using the bot entirely, then tell others to just call instead.

Here’s what this looks like for a typical HVAC company:

Booking success drops from 92% to 83% (9 percentage points)

At 50 bookings per week, that’s 4-5 failed bookings weekly

At a $150 average service call, that’s $600-$750 per week, or $7,800-$9,750 per quarter

Annual revenue loss: $31,200-$39,000

Compare that to maintenance costs: weekly monitoring and monthly reviews run about $400/month, or roughly $4,800 annually. You’re spending roughly 12-15% of the loss to prevent it.
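The same calculation as a small function, so you can plug in your own numbers. It assumes 52 weeks a year and 13 weeks a quarter, and that every lost booking would otherwise have been a paid call at the average ticket. `drift_cost` is an illustrative helper, not a standard formula.

```python
def drift_cost(weekly_booking_attempts: int, baseline_rate: float, drifted_rate: float,
               avg_ticket: float, monthly_maintenance: float) -> dict:
    """Estimate revenue lost to a drop in booking success vs. maintenance cost."""
    failed_per_week = weekly_booking_attempts * (baseline_rate - drifted_rate)
    weekly_loss = failed_per_week * avg_ticket
    annual_loss = weekly_loss * 52           # assumes 52 operating weeks
    annual_maintenance = monthly_maintenance * 12
    return {
        "failed_bookings_per_week": failed_per_week,
        "quarterly_loss": weekly_loss * 13,  # 13 weeks per quarter
        "annual_loss": annual_loss,
        "annual_maintenance": annual_maintenance,
        "maintenance_share": annual_maintenance / annual_loss,
    }


result = drift_cost(weekly_booking_attempts=50, baseline_rate=0.92, drifted_rate=0.83,
                    avg_ticket=150, monthly_maintenance=400)
```

With the inputs above, this works out to about 4.5 lost bookings a week, roughly $8,775 a quarter and $35,100 a year, against $4,800 a year in maintenance (about 14% of the loss).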

How to Catch AI Drift Problems Before Customers Do

Systematic practices catch cascade effects early and contain them before they compound.

This doesn’t stop external changes; providers will still update models. But prompt and model versioning lets you respond with controlled adjustments instead of emergency patches that introduce new problems.

When the pass rate on your baseline tests drops from 96% to 89%, you catch the issue in week three instead of month three. The fix is smaller because the problem hasn’t compounded yet. You see the first domino fall and stop the chain reaction.

Task completion below 85% requires investigation. Token usage per successful interaction up 30% means the bot is working harder for the same result. Customers abandoning conversations and calling support instead means trust is breaking.
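These thresholds translate directly into a weekly check against the launch baseline. Below is a minimal sketch, assuming you can export weekly counts of conversations, completed tasks, tokens used, and customers who gave up and phoned in. `WeeklyMetrics` and `drift_alerts` are hypothetical names. The 85% and 30% thresholds come from the text; the five-point handoff margin is an assumption to tune for your own traffic.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class WeeklyMetrics:
    conversations: int
    completed_tasks: int   # e.g. bookings actually made
    tokens_used: int
    phone_handoffs: int    # customers who abandoned the bot and called instead


def drift_alerts(baseline: WeeklyMetrics, week: WeeklyMetrics,
                 min_completion: float = 0.85,
                 max_token_growth: float = 0.30,
                 max_handoff_rise: float = 0.05) -> List[str]:
    """Compare one week to the launch baseline and list any red flags.

    Assumes the baseline has nonzero conversations and completed tasks.
    """
    if week.conversations == 0:
        return ["no conversations recorded this week"]
    alerts = []

    completion = week.completed_tasks / week.conversations
    if completion < min_completion:
        alerts.append(f"task completion {completion:.0%} is below {min_completion:.0%}")

    if week.completed_tasks == 0:
        alerts.append("no successful tasks this week")
    else:
        base_cost = baseline.tokens_used / baseline.completed_tasks
        cost = week.tokens_used / week.completed_tasks
        growth = cost / base_cost - 1
        if growth > max_token_growth:
            alerts.append(f"tokens per successful task up {growth:.0%} vs. baseline")

    base_handoff = baseline.phone_handoffs / baseline.conversations
    handoff = week.phone_handoffs / week.conversations
    if handoff - base_handoff > max_handoff_rise:
        alerts.append(f"phone handoffs rose from {base_handoff:.0%} to {handoff:.0%}")

    return alerts
```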

Real example:

A plumbing company’s voice agent passed automated tests, but call recordings showed it asking flooding victims to describe the problem in detail. Baseline testing with emergency scenarios would have caught the issue at week six, before any customer experienced it.
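A baseline suite is a fixed set of scenarios, including the rare but costly ones, replayed against the bot on a schedule. Below is a minimal sketch, assuming the agent’s routing decision can be read out as a label such as `emergency_dispatch`; checking free-text replies would need a rubric or a human reviewer instead of exact matching. All names here, including the toy keyword bots and the 2-point tolerance, are hypothetical stand-ins used only to show the mechanics.

```python
from typing import Callable, List, NamedTuple


class Scenario(NamedTuple):
    name: str
    customer_says: str
    expected_action: str  # the routing decision the bot should make


def pass_rate(bot: Callable[[str], str], scenarios: List[Scenario]) -> float:
    """Fraction of baseline scenarios where the bot chose the expected action."""
    passed = sum(1 for s in scenarios if bot(s.customer_says) == s.expected_action)
    return passed / len(scenarios)


def regressed(baseline_rate: float, current_rate: float, tolerance: float = 0.02) -> bool:
    """True when the pass rate has fallen more than `tolerance` below baseline."""
    return baseline_rate - current_rate > tolerance


BASELINE = [
    Scenario("flooded basement", "My basement is flooding right now", "emergency_dispatch"),
    Scenario("burst pipe", "A pipe just burst under the sink", "emergency_dispatch"),
    Scenario("routine check", "Can I book a water heater check next week?", "book_appointment"),
]


def launch_bot(text: str) -> str:
    # Toy stand-in for the real agent: a keyword router.
    t = text.lower()
    if "flood" in t or "burst" in t:
        return "emergency_dispatch"
    return "book_appointment"


def drifted_bot(text: str) -> str:
    # Simulates the failure above: flooding calls get questioned, not routed.
    if "flood" in text.lower():
        return "ask_for_details"
    return launch_bot(text)
```

Run against the drifted stand-in, the pass rate falls from 100% to about 67%, and `regressed` flags it.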

Maintaining 94% Accuracy: What Success Looks Like

Your HVAC booking bot launched in January.

By April, it’s still operating at 94% booking success. Token usage per successful booking stayed flat. Customer satisfaction held steady.

Not because drift didn’t try to happen. Because it was caught early and contained.

February: baseline testing flagged data drift after booking patterns shifted toward more urgent and same-day requests. The model was updated using recent interactions. Pass rates recovered. Customers never noticed a problem.

March: a provider model update changed response patterns. Prompt versioning surfaced the issue immediately. The team rolled back, tested adjustments in staging, and deployed safely. No customer impact.

Late March: a new service launched. The knowledge base was updated and the retrieval index rebuilt before announcement. Testing confirmed accuracy before customers asked their first questions.

April: a scheduled review caught tone drift. Responses were correct but increasingly formal. A prompt adjustment restored brand voice before trust eroded.

Final Thoughts

AI projects don’t usually fail because the technology stops working. They fail because performance quietly degrades while no one is watching. Drift doesn’t announce itself with errors or outages. It shows up as missed bookings, longer conversations, confused customers, and lost trust. By the time complaints surface, the damage is already done and the AI gets blamed for a failure that was really operational neglect.

The difference between AI that pays for itself and AI that gets abandoned isn’t better models or more features. It’s whether someone is actively measuring outcomes, reviewing behavior, and making small corrections before those issues compound. Teams that catch problems early, sometimes as early as the third week, never experience the sharp drop-off that forces a rethink. They maintain performance, protect revenue, and preserve confidence in the system.

AI isn’t a set-and-forget tool. It’s an operational system that lives inside a changing business. The businesses that succeed with AI are not the ones that launch and move on. They’re the ones that keep watching, keep testing, and keep performance where it needs to be long after the launch celebration is over.

Thank you for reading. If you have any questions, just ask “Nova”, the chatbot➡️
